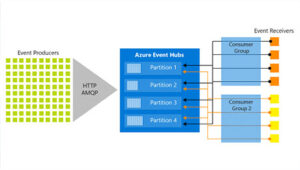

In our introductory blog post to Azure Sentinel and Event Hubs, we explained the basic functionality of Event Hubs and how they can help address data ingestion challenges in large organizations. In this post, we’ll take a deep dive into what happens between Event Hubs and Sentinel and give a more detailed view of how logs can be processed and filtered before being ingested into Sentinel. Such filtering can be required to limit the volume of logs ingested into Sentinel and reduce costs.

Two possible methods to process logs will be discussed: Azure Functions and Stream Analytics. These are not the only solutions available, however: any system or process that can act as an Event Hub consumer can technically be used for this purpose.

Filtering Event Hubs logs

Azure Event Hubs are useful to gather large volumes of logs and distribute them between different consumers of these logs, but can be limited when logs need to be further processed or filtered. If only a subset of the logs received is needed by the “end-user” of the logs (e.g. Sentinel), these logs have to be filtered by another service acting as a middle-man between the Event Hub and Sentinel. This is where Azure Functions and/or Azure Stream Analytics come into play.

Azure Functions

Azure Functions are serverless functions that run on a continually updated infrastructure managed by Microsoft. They can be used for any task that can be scripted, including sending emails or notifications, starting backups and processing events.

Azure Functions are an inexpensive option that can be rapidly deployed to process logs efficiently. The functions can be natively written in languages such as C#, Java, PowerShell, Python, or JavaScript. They are a simple-to-use yet powerful tool to process and filter logs before ingesting them into Sentinel.

An Azure Function deployed between an Event Hub and Sentinel would typically be composed of the following:

- An Event Hub trigger to start the function for each event or each batch of events received,

- A code section going through the logs to filter and/or enrich them,

- A code section sending the logs to Sentinel and its underlying Log Analytics Workspace using the Azure Monitor HTTP Data Collector API.

This can be a powerful solution when ingesting logs such as AKS (Azure Kubernetes Service) or SQL audit logs, as these can generate a large volume of events, some of which are not necessary for security monitoring.
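The three parts of such a function can be sketched in Python as follows. This is a minimal sketch under stated assumptions rather than a reference implementation: the category allow-list, the `FilteredLogs` custom log type and all function names are hypothetical, and the code assumes that messages arrive as JSON, optionally wrapped in a `records` array as Azure diagnostic logs usually are. Only the standard library is used, so the Event Hub trigger binding itself is left out.

```python
import base64
import hashlib
import hmac
import json
import urllib.request
from email.utils import formatdate

# Hypothetical allow-list: the log categories worth forwarding to Sentinel.
KEPT_CATEGORIES = {"kube-audit", "SQLSecurityAuditEvents"}


def extract_records(body: bytes) -> list:
    """Decode one Event Hub message body into a list of log records."""
    payload = json.loads(body)
    # Assumption: diagnostic logs arrive wrapped as {"records": [...]}.
    if isinstance(payload, dict) and "records" in payload:
        return payload["records"]
    return payload if isinstance(payload, list) else [payload]


def filter_records(records: list) -> list:
    """Keep only dict records whose category is on the allow-list."""
    return [
        r for r in records
        if isinstance(r, dict) and r.get("category") in KEPT_CATEGORIES
    ]


def build_signature(workspace_id, shared_key, date, content_length):
    """Build the SharedKey Authorization header value for the Data Collector API."""
    string_to_sign = (
        f"POST\n{content_length}\napplication/json\n"
        f"x-ms-date:{date}\n/api/logs"
    )
    digest = hmac.new(
        base64.b64decode(shared_key),
        string_to_sign.encode("utf-8"),
        hashlib.sha256,
    ).digest()
    return f"SharedKey {workspace_id}:{base64.b64encode(digest).decode()}"


def build_request(workspace_id, shared_key, log_type, records):
    """Prepare the HTTP POST that carries the records to the workspace."""
    body = json.dumps(records).encode("utf-8")
    date = formatdate(usegmt=True)  # RFC 1123 date, e.g. "Mon, 01 Jan 2024 00:00:00 GMT"
    headers = {
        "Content-Type": "application/json",
        "Authorization": build_signature(workspace_id, shared_key, date, len(body)),
        "Log-Type": log_type,
        "x-ms-date": date,
    }
    url = f"https://{workspace_id}.ods.opinsights.azure.com/api/logs?api-version=2016-04-01"
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


def send_request(request):
    """Default sender: perform the HTTP call and return the status code."""
    with urllib.request.urlopen(request) as response:
        return response.status


def process_batch(bodies, workspace_id, shared_key,
                  log_type="FilteredLogs", send=send_request):
    """Filter a batch of message bodies and forward what remains.

    Returns the number of records forwarded; nothing is sent when the
    filter drops every record.
    """
    kept = []
    for body in bodies:
        kept.extend(filter_records(extract_records(body)))
    if kept:
        send(build_request(workspace_id, shared_key, log_type, kept))
    return len(kept)
```

In a deployed Function App, the Event Hub trigger would hand the function a batch of events, and the entry point could call something like `process_batch([e.get_body() for e in events], workspace_id, shared_key)`, with the workspace ID and key read from application settings. Keeping the `send` step injectable makes the filtering logic testable without network access.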

Stream Analytics

Azure Stream Analytics is a real-time event-processing and analytics engine that can be directly connected to an Event Hub. The service makes it easy to ingest, process, and analyze streaming data from Azure Event Hubs, enabling powerful insights to drive real-time actions.

Stream Analytics does not, however, support outputting logs directly to a Log Analytics workspace (and therefore to Sentinel). It can still be an alternative to Azure Functions in some cases, such as storing logs in a storage account for long-term retention or creating dashboards based on the logs processed by the Event Hub. The service might also be better suited to teams that are familiar with database queries, as Stream Analytics queries are based on SQL (but can be extended with JavaScript or C# user-defined functions).

Summary

The versatile nature of Azure Event Hubs allows users to ingest logs from a wide range of sources, making them an attractive option for integrating legacy systems. Event Hubs also support the Apache Kafka protocol, simplifying integration with non-Azure systems that can use it (e.g. an ELK stack).
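As an illustration of the Kafka integration, the sketch below builds the client settings a librdkafka-based producer or consumer would typically need to reach an Event Hubs namespace. The helper name `kafka_client_config` and its parameters are hypothetical; the values reflect the commonly documented setup (port 9093, SASL over TLS, and the literal user name `$ConnectionString`), which should be checked against the namespace's tier and current documentation.

```python
def kafka_client_config(namespace: str, connection_string: str) -> dict:
    """Return librdkafka-style settings for an Event Hubs Kafka endpoint."""
    return {
        # The Kafka endpoint of an Event Hubs namespace listens on port 9093.
        "bootstrap.servers": f"{namespace}.servicebus.windows.net:9093",
        "security.protocol": "SASL_SSL",
        "sasl.mechanism": "PLAIN",
        # The user name is this literal string; the secret is the
        # namespace (or Event Hub) connection string.
        "sasl.username": "$ConnectionString",
        "sasl.password": connection_string,
    }
```

With these settings, the Event Hub name plays the role of the Kafka topic.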

With the flexibility and capabilities provided by other services such as Azure Functions or Stream Analytics, logs ingested by the Event Hub can easily be enriched and/or filtered to ensure each consumer gets access to the logs it requires without being overloaded.

Although the default connection method for Event Hubs is via the public network, Event Hubs can be paired with Azure Virtual Networks to allow a private flow of data. This can be taken one step further by pairing it with ExpressRoute to securely ingest data from a locked-down on-premises source.